By Chris Ullrich, CTO at Immersion

Over the last two months, I introduced the haptic stack – a conceptual framework that we use at Immersion to think about the key technology components of an engaging haptic experience – and described the hardware layer. As a quick refresher, the stack consists of three key layers: design, software, and hardware (see this post for more detail). These layers work together in a highly interdependent way to create a tactile experience that is in harmony with the overall UX of a product or experience. Product designers need to think carefully about the trade-offs in all three layers when designing a product, to deliver a valuable and delightful end-user experience.

This month we’re going to delve into the middle layer – the software layer. We’ll primarily focus on software that is used to build products and applications and leave application-level software for a later post.

The software layer interfaces with the driver electronics (below) as well as with applications (above) that utilize haptics.

Effect Representations

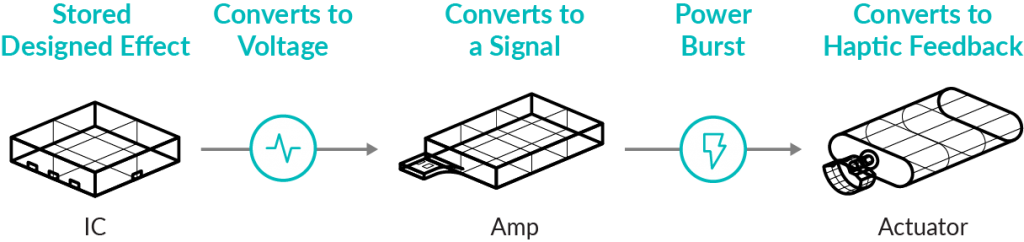

The software layer is responsible for orchestrating the haptic hardware by generating signals that can be rendered on that hardware in response to application state or user interactions. It is important to think about how these signals are represented in order to understand the choices and capabilities available in system-level software.

At the lowest level, haptic driver ICs commonly provide on-chip storage for buffers or commands that can be triggered to generate a voltage. The data stored in these buffers is normally very close to the signal that is sent to the amplifier and is always driver IC-specific. This capability is typically used for haptic products that don’t have an operating system, since the buffer can be triggered directly with an I2C signal or other low-level commands. These effects are normally used for button-like feedback because this configuration has very low latency and limited dependence on software state. The effects themselves are also specific to the actuator and driver present in the system. Although the capabilities of haptic effects in this context depend heavily on the hardware choice, upgrading to better haptic hardware doesn’t necessarily equate to more capabilities. With a higher-grade actuator and a higher-performing driver IC, the software layer plays a greater role in leveraging the capabilities of the haptic hardware.

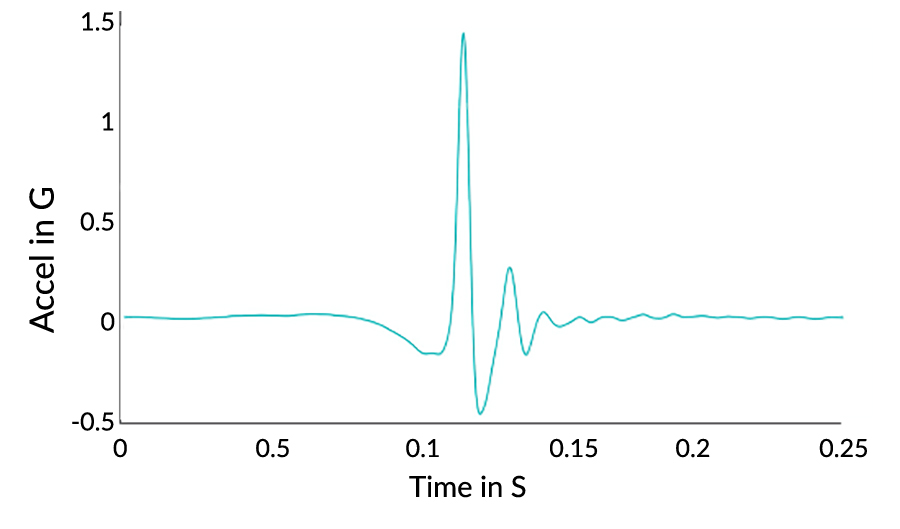

A more general representation of haptic effects is a timed series of amplitude values with a regular sampling rate. Operating systems such as Android and iOS can digest buffers of such signal data, typically with one sample per millisecond (a 1 kHz sampling rate), and render them to the available haptic hardware. This format is low level, but it is easy to generate using audio tools like Audacity. A key challenge with this effect encoding is that the resulting haptic experience varies widely in practice due to mechanical variance at the motor level. As I noted in last month’s blog, every haptic actuator has distinct performance characteristics. With a basic signal-level representation, these variances must be taken into account when the effects are created. For products that all use the same motor, this can be acceptable. Still, for application software that is intended to run on different devices (e.g., different Android models), the resulting experience will vary from ‘exactly as intended’ to ‘noisy and confusing.’
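To make the signal-level format concrete, here is a minimal Python sketch of a buffer holding one amplitude value per millisecond. The function names and the choice of a linearly decaying pulse are illustrative assumptions, not part of any platform API.

```python
SAMPLE_PERIOD_MS = 1  # one amplitude value per millisecond


def decaying_pulse(duration_ms, peak=1.0):
    """Amplitude envelope in [0, 1]: starts at `peak` and falls linearly toward 0."""
    count = duration_ms // SAMPLE_PERIOD_MS
    return [peak * (1.0 - n / count) for n in range(count)]


def quantize_8bit(envelope):
    """Convert amplitudes in [0, 1] to integers in [0, 255]."""
    return [max(0, min(255, round(a * 255))) for a in envelope]


# A 10 ms pulse becomes ten 8-bit values, one per millisecond.
effect = quantize_8bit(decaying_pulse(duration_ms=10))
print(effect)
```

Nothing in this buffer describes the motor it will play on, which is why the same values can feel quite different from one device to the next.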

Another approach to haptic effect representation is to capture the design intent of the effect using an abstract signal representation. Abstract signal representations are conceptual by design and must be interpreted by the OS software and then used to synthesize a signal level effect (usually at runtime). The synthesis step can be accomplished by transforming the conceptual effect description using a motor model that represents the actual hardware on the device. This approach effectively decouples and abstracts the hardware dependence from the effect design activity and is an important component of a general haptic software solution, particularly for markets that have applications that will run on different hardware.
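The synthesis step might look roughly like the sketch below. It assumes a deliberately simple motor model (a resonant frequency and a gain); `AbstractEffect`, `MotorModel`, and `synthesize` are hypothetical names chosen for illustration, and real motor models are considerably richer.

```python
import math
from dataclasses import dataclass


@dataclass
class AbstractEffect:      # hypothetical design-intent description
    intensity: float       # intended perceived strength, 0.0 to 1.0
    duration_ms: int


@dataclass
class MotorModel:          # hypothetical per-device actuator description
    resonant_hz: float     # frequency at which this actuator responds best
    gain: float            # fraction of full drive this motor needs at full intensity


def synthesize(effect, motor):
    """Turn the abstract effect into 1 ms drive samples in [-1, 1] for this motor."""
    peak = min(1.0, effect.intensity * motor.gain)
    return [
        peak * math.sin(2.0 * math.pi * motor.resonant_hz * (n / 1000.0))
        for n in range(effect.duration_ms)
    ]


# One design, two devices: the signal differs, the intent stays the same.
click = AbstractEffect(intensity=0.8, duration_ms=30)
phone_a = synthesize(click, MotorModel(resonant_hz=170.0, gain=1.0))
phone_b = synthesize(click, MotorModel(resonant_hz=235.0, gain=0.6))
```

The designer only ever edits `click`; the per-device differences live entirely in the motor model.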

Some Important APIs

There are a few important in-market haptic software APIs to consider, but, notably, there is a wide variance in approach and sophistication. This variance likely represents a key barrier for application developers who want to make use of haptics since they will normally need to reimplement/test their application on each platform and hardware model to ensure consistency of experience.

Core Haptics (iOS)

Core Haptics was released in mid-2019 and is one of the most sophisticated in-market examples of a haptic API. Core Haptics uses an abstraction model that enables effects to be specified using ADSR (see Wikipedia) envelopes. These envelopes are parsed at runtime within iOS and used to synthesize a motor-specific signal that is sent to the amplifier and ultimately to the actuator. Core Haptics is a rich and expressive API, and I highly recommend reading this blog post at Lofelt, which provides a great technical overview.
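For readers unfamiliar with ADSR, the sketch below computes a simple linear attack-decay-sustain-release envelope in plain Python. It illustrates the envelope idea only and is not Core Haptics code; the function name and its millisecond-based parameters are assumptions made for this example.

```python
def adsr_envelope(attack_ms, decay_ms, sustain_level, sustain_ms, release_ms, peak=1.0):
    """Per-millisecond amplitudes: ramp up to peak, fall to sustain, hold, ramp to zero."""
    env = []
    # Attack: rise linearly and reach `peak` on the last attack sample.
    env += [peak * (n + 1) / attack_ms for n in range(attack_ms)]
    # Decay: fall linearly from `peak` to `sustain_level`.
    env += [peak - (peak - sustain_level) * (n + 1) / decay_ms for n in range(decay_ms)]
    # Sustain: hold a constant level.
    env += [sustain_level] * sustain_ms
    # Release: fall linearly from `sustain_level` to zero.
    env += [sustain_level * (1.0 - (n + 1) / release_ms) for n in range(release_ms)]
    return env


envelope = adsr_envelope(attack_ms=5, decay_ms=5, sustain_level=0.5,
                         sustain_ms=10, release_ms=5)
```

Four numbers and a sustain level describe the whole shape, which is what makes this kind of description compact enough to reinterpret for each motor at runtime.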

Android Vibrate (Google)

Android has supported vibration control since at least version 2.0. The API is documented on this website. Android doesn’t support effect abstraction and instead provides only a low-level signal interface: a buffer of 8-bit amplitudes sampled at 1 ms intervals. Although this API is very capable, it doesn’t provide any hardware abstraction to developers and thus suffers from the device-to-device inconsistency described above.
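As a rough illustration of this kind of signal-level encoding, the Python sketch below expands a list of segment durations and 8-bit amplitudes into a flat one-value-per-millisecond buffer. It is loosely modeled on the timings-plus-amplitudes style of waveform description; `expand_waveform` is a hypothetical helper, not an Android call.

```python
def expand_waveform(timings_ms, amplitudes):
    """Expand (duration, amplitude) segments into one 8-bit amplitude per millisecond."""
    if len(timings_ms) != len(amplitudes):
        raise ValueError("timings_ms and amplitudes must have the same length")
    buffer = []
    for duration, amplitude in zip(timings_ms, amplitudes):
        if not 0 <= amplitude <= 255:
            raise ValueError("amplitudes must be 8-bit values in [0, 255]")
        buffer.extend([amplitude] * duration)
    return buffer


# A strong 30 ms pulse, a 50 ms gap, then a weaker 30 ms pulse.
double_pulse = expand_waveform([30, 50, 30], [255, 0, 90])
```

The values say how hard to drive the motor and for how long, and nothing about which motor is being driven.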

OpenXR 1.0 (Khronos)

Last year, the Khronos Group released OpenXR 1.0, which includes support for haptic feedback devices. This API is targeted at XR and gaming use cases and is intended to provide consistent, low-level hardware abstraction that enables high-performance applications. The effect encoding in OpenXR is a list of individual vibration effects, each of which has a fixed duration, frequency, and amplitude. Conceptually, this API is a bit more sophisticated than the signal-level API in Android. Still, it doesn’t meaningfully abstract the tight hardware dependence that is a hallmark of haptic feedback and will likely suffer from the same inconsistencies present in Android if the same effect lists are played on devices with different actuators.
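The effect-list encoding described above can be pictured with the small Python sketch below. The `Vibration` class only mirrors the three fields named in the text, with units chosen for this example; it is not the OpenXR C API, and `schedule` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vibration:          # hypothetical mirror of the three fields named above
    duration_ms: int
    frequency_hz: float
    amplitude: float      # 0.0 (off) to 1.0 (full strength)


def schedule(effects):
    """Return (start_ms, vibration) pairs for effects played back to back."""
    timeline, start = [], 0
    for vibration in effects:
        if not 0.0 <= vibration.amplitude <= 1.0:
            raise ValueError("amplitude must be in [0.0, 1.0]")
        timeline.append((start, vibration))
        start += vibration.duration_ms
    return timeline


# A strong hit, a short pause, then a longer, softer rumble.
rumble = [
    Vibration(duration_ms=40, frequency_hz=160.0, amplitude=1.0),
    Vibration(duration_ms=20, frequency_hz=160.0, amplitude=0.0),
    Vibration(duration_ms=80, frequency_hz=90.0, amplitude=0.4),
]
timeline = schedule(rumble)
```

Adding frequency makes each entry more expressive than a bare amplitude, but whether 160 Hz feels crisp or muddy still depends on the actuator that plays it.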

Software Use Cases

Given that background, let’s look at some use cases of these haptic APIs and discuss the capabilities and shortcomings of each.

Mobile and Automotive Confirmation Effects

Short, high amplitude effects are the hallmark of button replacement use cases. Recall the quality of the Apple haptic home button when it was first released. Many users were convinced that it was a real button. This type of use case is normally supported as a driver IC-level effect buffer. The advantage of this is that the system has very low latency. Since buttons are normally static, it is acceptable to have limited software control over the effect itself.

Mobile Gaming Effects

Mobile games have traditionally not made extensive use of the built-in haptic capabilities of mobile devices. Now that Apple has enabled developers to create haptic effects in their apps, we expect this to change. The ADSR abstraction introduced in Core Haptics, along with tight integration with audio playback, is ideal for haptic game effect design. This API enables developers to create haptic and audio effects together and manage them as similar assets. It would be nice to see similar functionality come to Android devices in Android 11.

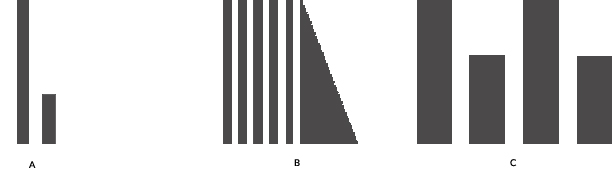

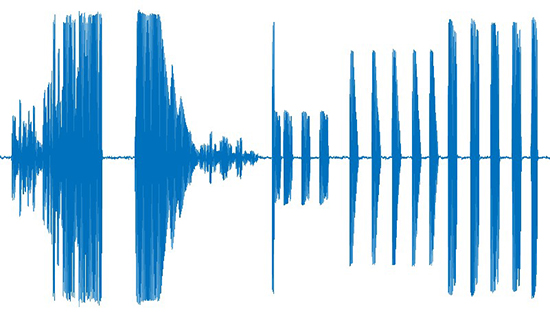

A. A short double pulse with a finite space between the pulses. The second pulse is much lower in magnitude than the first.

B. A quick repeating pattern of pulses that ends with a long decay on the last pulse.

C. Four separate pulses with alternating strong and moderate magnitudes.

Game Controller Effects

The new PS5 DualSense controller will have both HD haptics and active trigger feedback. Both of these capabilities are sophisticated and have a lot of potential for game experiences. However, it is not clear that the OpenXR API is sufficient to enable this platform, and Sony has not (publicly) released any developer tools. It would be ideal if an abstraction-level effect encoding and API existed to facilitate widespread and cross-platform usage of advanced haptics in the next generation of console and PC gaming.

An explosion followed by gunshots

Summary

This month we reviewed the software layer of the haptic stack. As with the hardware layer, there are a lot of disparate choices and approaches, and each one impacts the ability of a product to fulfill its haptic features and requirements. This layer is also the layer that could benefit from enhanced industry standardization both in terms of APIs and in terms of effect encodings. Next month, we’ll look at the top of the stack and examine how haptic experiences are designed and how this ties together the entire stack. We’ll see you then!
